Machine Learning History

Arthur Samuel coined the phrase “Machine Learning”. He was an IBM pioneer in the fields of computer gaming and artificial intelligence.

Arthur Samuel playing checkers

Here is a short history of machine learning.

Table of Contents:

- 1763 Bayes’ Theorem

- 1805 Least Squares Method

- 1913 Markov Chains

- 1950 Turing’s Test

- 1951 First Neural Network Machine

- 1952 Machines Playing Checkers

- 1957 The Perceptron

- 1963 Machines Playing Tic-Tac-Toe

- 1964 Study of the Visual Cortex

- 1967 Nearest Neighbor

- 1970 Automatic Differentiation (Backpropagation)

- 1980 Fukushima’s Neocognitron

- 1982 Recurrent Neural Network

- 1985 NetTalk

- 1986 Backpropagation

- 1989 Recognizing Handwritten ZIP Code Digits

- 1989 Reinforcement Learning

- 1989 Universal Approximation Theorem

- 1995 Random Forest Algorithm

- 1995 Support-Vector Machines

- 1997 IBM Deep Blue Beats Kasparov

- 1997 LSTM

- 1998 MNIST database

- 2002 Torch Machine Learning Library

- 2006 The Netflix Prize Challenge

- 2009 ImageNet

- 2010 Kaggle Competition

- 2011 Beating Humans in Jeopardy

- 2012 AlexNet

- 2012 Recognizing Cats on YouTube

- 2013 Word2vec

- 2014 DeepFace

- 2014 Sibyl

- 2016 Beating Humans in Go

- 2017 Transformers

- 2020 AlphaFold 2

1763 Bayes’ Theorem

Bayes’ theorem is a method for computing conditional probabilities. A conditional probability is the probability of one event occurring given that another event has already occurred.

The theorem was discovered among the papers of the English mathematician Thomas Bayes and published posthumously in 1763.
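In symbols, Bayes’ theorem reads P(A | B) = P(B | A) · P(A) / P(B). The short Python sketch below applies it to a binary hypothesis, expanding P(B) with the law of total probability. The function name `bayes_posterior` and the diagnostic-test numbers are hypothetical, chosen only for illustration.

```python
def bayes_posterior(prior: float, likelihood: float, false_positive_rate: float) -> float:
    """Return P(A | B) for a binary hypothesis A given evidence B.

    prior               = P(A)
    likelihood          = P(B | A)
    false_positive_rate = P(B | not A)
    """
    # Law of total probability: P(B) = P(B|A)P(A) + P(B|not A)P(not A)
    evidence = likelihood * prior + false_positive_rate * (1.0 - prior)
    # Bayes' theorem: P(A|B) = P(B|A)P(A) / P(B)
    return likelihood * prior / evidence


# Hypothetical numbers: 1% prevalence, 90% sensitivity, 5% false positives.
posterior = bayes_posterior(prior=0.01, likelihood=0.9, false_positive_rate=0.05)
print(f"P(condition | positive test) = {posterior:.3f}")
```

With these made-up numbers the posterior is only about 15%: because the condition is rare, most positive results come from the much larger group without it.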

1805 Least Squares Method

In 1805, Adrien-Marie Legendre published the least squares method, which is now widely used for data fitting.
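As a minimal sketch, assuming the simplest case of fitting a straight line y = a + b·x, least squares chooses a and b to minimize the sum of squared residuals, which has a closed-form solution. The function `fit_line` and the sample points are hypothetical.

```python
def fit_line(xs, ys):
    """Fit y = a + b*x by ordinary least squares (minimize the sum of squared residuals)."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two paired points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope b = cov(x, y) / var(x); the intercept makes the line pass through the means.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    b = sxy / sxx
    a = mean_y - b * mean_x
    return a, b


xs = [0, 1, 2, 3, 4]
ys = [1.1, 2.9, 5.2, 7.1, 8.8]
a, b = fit_line(xs, ys)
print(f"y = {a:.2f} + {b:.2f} * x")
```

For these sample points the fit gives roughly a = 1.1 and b = 1.96.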

1913 Markov Chains

A Markov chain or Markov process is a stochastic model describing a sequence of possible events in which the probability of each event depends only on the state attained in the previous event. It is named after the Russian mathematician Andrey Markov.

Markov chains are used to model traffic flows, communication networks, genetics, …
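The sketch below illustrates the Markov property with a hypothetical two-state weather model; the states and transition probabilities are made up. `step_distribution` pushes a probability distribution one step forward, and `sample_path` draws a random sequence in which each state depends only on the previous one.

```python
import random

# Hypothetical two-state weather model: the next state depends only on the current one.
TRANSITIONS = {
    "sunny": {"sunny": 0.9, "rainy": 0.1},
    "rainy": {"sunny": 0.5, "rainy": 0.5},
}


def step_distribution(dist):
    """Propagate a probability distribution over states by one step of the chain."""
    nxt = {s: 0.0 for s in TRANSITIONS}
    for state, p in dist.items():
        for target, q in TRANSITIONS[state].items():
            nxt[target] += p * q
    return nxt


def sample_path(start, steps, rng):
    """Sample a sequence of states; each draw uses only the previous state."""
    path = [start]
    for _ in range(steps):
        options = TRANSITIONS[path[-1]]
        path.append(rng.choices(list(options), weights=list(options.values()))[0])
    return path


dist = {"sunny": 1.0, "rainy": 0.0}
for _ in range(100):
    dist = step_distribution(dist)
print(dist)  # approximately 5/6 sunny and 1/6 rainy
path = sample_path("sunny", 10, random.Random(0))
print(path)
```

For this transition table, repeated steps converge to the stationary distribution of 5/6 sunny and 1/6 rainy, whatever the starting state.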

1950 Turing’s Test

In 1935 Turing described an abstract computing machine consisting of a limitless memory and a scanner that moves back and forth through the memory, symbol by symbol, reading what it finds and writing further symbols.

The Turing test, which Alan Turing originally called the imitation game in 1950, tests a machine’s ability to exhibit intelligent behavior equivalent to that of a human.

1951 First Neural Network Machine

Marvin Minsky and Dean Edmonds built the first neural network machine able to learn.

1952 Machines Playing Checkers

Arthur Samuel begins working on some of the very first machine learning programs, starting with programs that play checkers.

1957 The Perceptron

In 1957, psychologist Frank Rosenblatt invented the perceptron, an early artificial neural network intended to model how the human brain processes visual data and to learn to recognize objects.
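A minimal sketch of the perceptron learning rule in Python, assuming binary labels 0/1 and a step activation: whenever an example is misclassified, the weights and bias are nudged toward the correct answer. Rosenblatt’s perceptron was built as hardware, so this only illustrates the learning rule, trained here on the logical AND function; the names `train_perceptron` and `predict` are hypothetical.

```python
def predict(w, b, x):
    """Step activation: output 1 if the weighted sum plus bias is positive."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    """Perceptron learning rule.

    samples: list of (features, label) pairs with label in {0, 1}.
    """
    n = len(samples[0][0])
    w = [0.0] * n
    b = 0.0
    for _ in range(epochs):
        errors = 0
        for x, y in samples:
            update = lr * (y - predict(w, b, x))  # zero when the prediction is correct
            if update:
                w = [wi + update * xi for wi, xi in zip(w, x)]
                b += update
                errors += 1
        if errors == 0:  # converged: every sample is classified correctly
            break
    return w, b


AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w, b = train_perceptron(AND)
preds = [predict(w, b, x) for x, _ in AND]
print(preds)
```

Because AND is linearly separable, the rule converges to weights that classify all four inputs correctly; on a non-separable problem such as XOR it would never converge.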

1963 Machines Playing Tic-Tac-Toe

Donald Michie creates a ‘machine’ consisting of 304 matchboxes and beads, which uses reinforcement learning to play Tic-Tac-Toe.

1964 Study of the Visual Cortex

In 1981, Hubel and Wiesel won the Nobel Prize for their findings about the visual cortex, reported in their 1964 paper.

Using microelectrodes, they recorded the electrical activity of individual neurons in the brains of cats and monitored how a single neuron’s action potentials changed in response to visual stimuli.

They concluded that neurons in the brain are organized into local receptive fields. This inspired a new class of neural architectures with local, shared weights.

1967 Nearest Neighbor

The nearest neighbor algorithm was created, marking the beginning of basic pattern recognition. The algorithm was used to map routes.
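Nearest neighbor classification labels a new point with the label of the closest known point or points. A minimal sketch, assuming Euclidean distance and a majority vote among the k nearest points (k = 1 gives the plain nearest neighbor rule); `knn_predict` and the toy data are hypothetical, and `math.dist` requires Python 3.8 or later.

```python
import math
from collections import Counter


def knn_predict(train, query, k=1):
    """Classify `query` by majority vote among its k nearest training points.

    train: list of (point, label) pairs; distances are Euclidean.
    """
    nearest = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


train = [((1.0, 1.0), "A"), ((1.2, 0.8), "A"), ((5.0, 5.0), "B"), ((5.5, 4.5), "B")]
print(knn_predict(train, (1.1, 1.0)))        # closest point has label "A"
print(knn_predict(train, (4.8, 5.1), k=3))   # two of the three nearest are "B"
```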

1970 Automatic Differentiation (Backpropagation)

Seppo Linnainmaa publishes the general method for automatic differentiation (AD) of discrete connected networks of nested differentiable functions. This corresponds to the modern version of backpropagation, but is not yet named as such.
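To illustrate the reverse mode, the hypothetical `Var` class below records each operation together with its local derivative; `backward` then walks the graph in reverse topological order and applies the chain rule to accumulate gradients. It is a minimal sketch supporting only addition, multiplication, and sine, not Linnainmaa’s original formulation.

```python
import math


class Var:
    """Scalar node in a computation graph for reverse-mode automatic differentiation."""

    def __init__(self, value, parents=()):
        self.value = value
        self.parents = parents  # pairs of (parent node, d self / d parent)
        self.grad = 0.0

    def __add__(self, other):
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other):
        return Var(self.value * other.value, ((self, other.value), (other, self.value)))

    def sin(self):
        return Var(math.sin(self.value), ((self, math.cos(self.value)),))

    def backward(self):
        """Propagate d(output)/d(node) from this output back to every input."""
        order, seen = [], set()

        def visit(node):  # topological sort: parents are appended before children
            if id(node) not in seen:
                seen.add(id(node))
                for parent, _ in node.parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):  # each node's grad is complete before it is used
            for parent, local in node.parents:
                parent.grad += local * node.grad  # chain rule


x, y = Var(2.0), Var(3.0)
f = x * y + x.sin()  # f(x, y) = x*y + sin(x)
f.backward()
print(x.grad, y.grad)  # df/dx = y + cos(x), df/dy = x
```

A single backward pass yields the derivatives with respect to all inputs at once, which is why the reverse mode suits neural networks, where one loss depends on many parameters.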

1980 Fukushima’s Neocognitron

In 1980, Kunihiko Fukushima published the neocognitron, the first deep convolutional neural network (CNN) architecture. Fukushima proposed several supervised and unsupervised learning algorithms to train the parameters of a deep neocognitron so that it could learn internal representations of incoming data. He did not use backpropagation at the time.

1982 Recurrent Neural Network

John Hopfield popularizes Hopfield networks, a type of recurrent neural network that can serve as content-addressable memory systems.

1985 NetTalk

Terry Sejnowski develops NetTalk, a program that learns to pronounce words the same way a baby does.

1986 Backpropagation

Seppo Linnainmaa’s reverse mode of automatic differentiation (first applied to neural networks by Paul Werbos) is used in experiments by David Rumelhart, Geoff Hinton and Ronald J. Williams to learn internal representations.

1989 Recognizing Handwritten ZIP Code Digits

In 1989, Yann LeCun successfully used backpropagation to recognize images of handwritten digits. Later, he applied the same approach to handwritten ZIP codes.

1989 Reinforcement Learning

Christopher Watkins develops Q-learning, which greatly improves the practicality and feasibility of reinforcement learning.
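Q-learning learns a table of action values with the update Q(s, a) ← Q(s, a) + α [r + γ max over a′ of Q(s′, a′) - Q(s, a)]. A minimal sketch, assuming a hypothetical five-state corridor in which only reaching the rightmost state pays a reward of 1; the environment, the hyperparameters (α = 0.5, γ = 0.9, ε = 0.2), and all function names are made up for illustration.

```python
import random

N_STATES = 5          # hypothetical corridor: states 0..4, state 4 is the goal
ACTIONS = (-1, +1)    # move left or move right
GAMMA, ALPHA, EPSILON = 0.9, 0.5, 0.2


def step(state, action):
    """Deterministic corridor: reward 1 only when the goal is reached."""
    nxt = min(max(state + action, 0), N_STATES - 1)
    done = nxt == N_STATES - 1
    return nxt, (1.0 if done else 0.0), done


def greedy(q, state, rng):
    """Pick a highest-valued action, breaking ties at random."""
    best = max(q[state])
    return rng.choice([a for a in range(len(ACTIONS)) if q[state][a] == best])


def q_learning(episodes=500, seed=0):
    rng = random.Random(seed)
    q = [[0.0] * len(ACTIONS) for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done, steps = 0, False, 0
        while not done and steps < 100:
            # epsilon-greedy behavior policy
            if rng.random() < EPSILON:
                a = rng.randrange(len(ACTIONS))
            else:
                a = greedy(q, state, rng)
            nxt, reward, done = step(state, ACTIONS[a])
            # Q-learning update: bootstrap from the best action in the next state
            target = reward if done else reward + GAMMA * max(q[nxt])
            q[state][a] += ALPHA * (target - q[state][a])
            state, steps = nxt, steps + 1
    return q


q = q_learning()
policy = ["right" if row[1] > row[0] else "left" for row in q[:-1]]
print(policy)
```

Because the update bootstraps from the best next action rather than the action actually taken, Q-learning is off-policy: it learns the optimal policy (always move right) even while the ε-greedy behavior keeps exploring.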

1989 Universal Approximation Theorem

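The universal approximation theorem (UAT) states that a feedforward neural network with a single hidden layer containing enough neurons can approximate any continuous function on a compact domain to arbitrary accuracy.

George Cybenko proved it in 1989 for sigmoid activation functions.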

1995 Random Forest Algorithm

Tin Kam Ho publishes a paper describing random decision forests.

1995 Support-Vector Machines

Corinna Cortes and Vladimir Vapnik publish their work on support-vector machines.

1997 IBM Deep Blue Beats Kasparov

IBM’s Deep Blue beats world chess champion Garry Kasparov.

1997 LSTM

Sepp Hochreiter and Jürgen Schmidhuber invent long short-term memory (LSTM) recurrent neural networks, greatly improving the efficiency and practicality of recurrent neural networks.

1998 MNIST database

A team led by Yann LeCun releases the MNIST database, a dataset comprising a mix of handwritten digits from American Census Bureau employees and American high school students. The MNIST database has since become a benchmark for evaluating handwriting recognition.

2002 Torch Machine Learning Library

Torch, a software library for machine learning, is first released.

2006 The Netflix Prize Challenge

Netflix launches the Netflix Prize competition. The aim was to use machine learning to beat the accuracy of Netflix’s own recommendation software by at least 10% at predicting a user’s rating for a film, given their ratings of previous films.[40] The prize was won in 2009.

2009 ImageNet

ImageNet is created. ImageNet is a large visual database envisioned by Fei-Fei Li from Stanford University, who realized that the best machine learning algorithms wouldn’t work well if the data didn’t reflect the real world.

2010 Kaggle Competition

Kaggle, a website that serves as a platform for machine learning competitions, is launched.

2011 Beating Humans in Jeopardy

Using a combination of machine learning, natural language processing and information retrieval techniques, IBM’s Watson beats two human champions at Jeopardy!

2012 AlexNet

AlexNet, a deep convolutional neural network, was introduced in the ImageNet Large Scale Visual Recognition Challenge (ILSVRC 2012), which it won by a large margin, setting a precedent for the field of deep learning.

2012 Recognizing Cats on YouTube

The Google Brain team, led by Andrew Ng and Jeff Dean, creates a neural network that learns to recognize cats by watching unlabeled images taken from frames of YouTube videos.

2013 Word2vec

Using the Word2vec algorithm (Mikolov et al.), it became possible to learn word representations in which each word has 50 or more latent features.

The major gain of this latent approach compared to the co-occurrence matrix approach is memory savings.

We do not have to build an N×N co-occurrence matrix, where N is the number of distinct words; instead, we only have to learn an N×K matrix, where K is usually around 100 (typically between 50 and 1000).

Word2vec was capable of word arithmetic for the first time: the vector closest to the left-hand side below is the one for "queen".

W['king'] - W['man'] + W['woman'] ≈ W['queen']
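In practice, such an analogy is resolved by searching for the word whose vector has the highest cosine similarity to W['king'] - W['man'] + W['woman'], excluding the three query words. The sketch below shows that lookup; the three-dimensional vectors are hypothetical toy values constructed so the analogy works, not real Word2vec embeddings, and `math.hypot` with more than two arguments requires Python 3.8 or later.

```python
import math

# Hypothetical toy embeddings; real Word2vec vectors are learned and much longer.
W = {
    "king":  [0.9, 0.8, 0.1],
    "queen": [0.9, 0.1, 0.8],
    "man":   [0.1, 0.9, 0.1],
    "woman": [0.1, 0.2, 0.8],
    "apple": [0.0, 0.5, 0.5],
}


def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))


def analogy(a, b, c, vectors):
    """Return the word whose vector is closest to vectors[a] - vectors[b] + vectors[c]."""
    target = [x - y + z for x, y, z in zip(vectors[a], vectors[b], vectors[c])]
    candidates = (w for w in vectors if w not in {a, b, c})  # exclude the query words
    return max(candidates, key=lambda w: cosine(vectors[w], target))


print(analogy("king", "man", "woman", W))
```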

2014 DeepFace

Facebook researchers publish their work on DeepFace, a system that uses neural networks to identify faces with 97.35% accuracy. The results are an improvement of more than 27% over previous systems and rival human performance.

2014 Sibyl

Researchers from Google detail their work on Sibyl, a proprietary platform for massively parallel machine learning used internally by Google to make predictions about user behavior and provide recommendations.

2016 Beating Humans in Go

Google’s AlphaGo program becomes the first computer Go program to beat an unhandicapped professional human player, using a combination of machine learning and tree search techniques. It was later improved as AlphaGo Zero and then, in 2017, generalized to chess and other two-player games as AlphaZero.

2017 Transformers

Transformers, introduced in the 2017 paper “Attention Is All You Need”, are used in natural language processing (NLP) and computer vision (CV). Like recurrent neural networks (RNNs), transformers are designed to handle sequential input data, such as natural language, for tasks such as translation and text summarization.

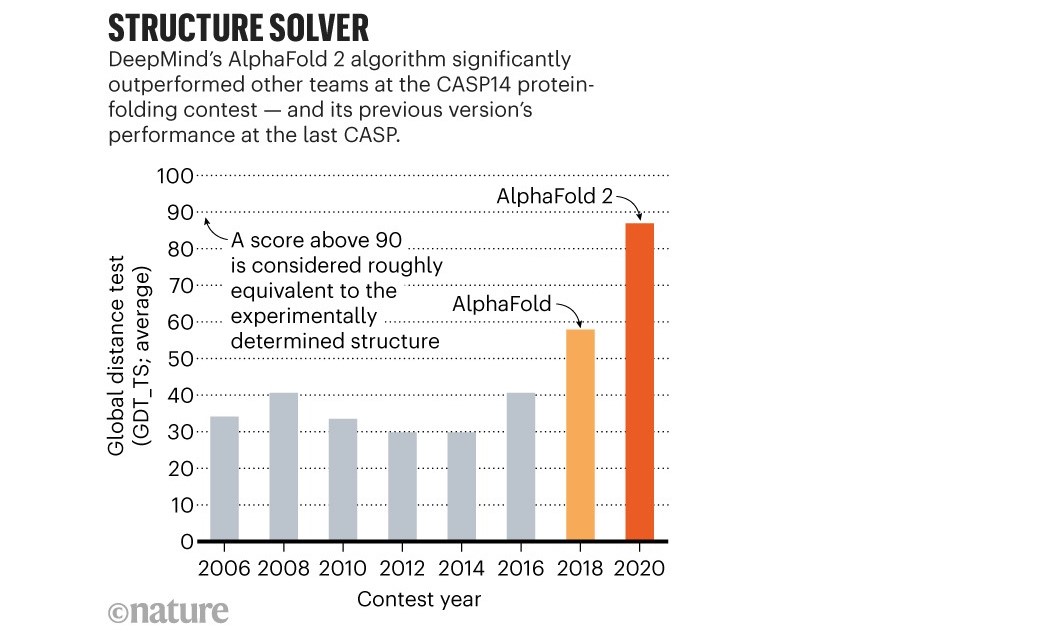

2020 AlphaFold 2

AlphaFold 2 outperformed around 100 other teams in CASP (Critical Assessment of Structure Prediction), a biennial protein-structure prediction challenge.

AlphaFold 2 global distance test

Resource: Timeline_of_machine_learning

…

tags: history & category: machine-learning