Object detection

Object detection is a machine learning technique used to identify and locate objects in images or videos. It’s important for things like self-driving cars, security cameras, medical imaging and more.

Deep learning-based methods are popular and involve using neural networks to detect objects. Usually it requires a large dataset of labeled images for training.

Popular models

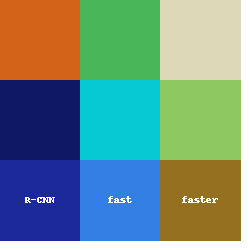

Popular deep learning-based object detection models include:

- R-CNN

- Fast R-CNN

- Faster R-CNN

- YOLO

- SSD

The Process of object detection

The process of object detection consists of three parts:

-

Region Proposal: This is the initial step where potential objects in the image are identified using algorithms like selective search, edge boxes, or region proposal networks (RPN). These methods generate a set of candidate regions that may contain an object.

-

Feature Extraction: In this step, a deep neural network is used to extract features from the image or regions of interest. These features are representations of the image or regions that can be used to differentiate between different objects.

-

Classification and Localization: The final step involves using the extracted features to classify the object and determine its location. This is typically done using a separate classification network and regression network. The classification network is used to classify the object into different categories, while the regression network is used to refine the object’s location within the image or region of interest.

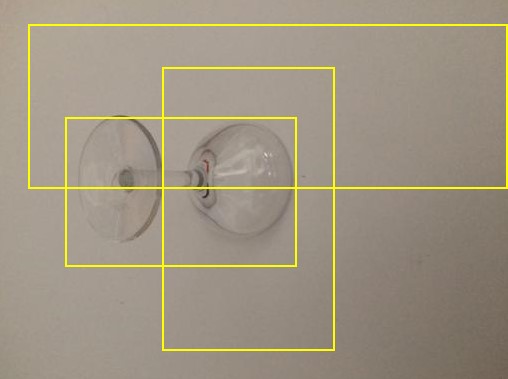

Region Proposal idea

The output of the object detection process is a set of bounding boxes around each detected object along with their corresponding class labels.

The following image shows some of the region proposals.

In reality the number of regions can exceed 2000.

R-CNN

In object detection, the feature vector and the region proposal are connected using a process called Region-Based Convolutional Neural Networks (R-CNNs).

R-CNNs combine the output of a region proposal algorithm, such as selective search or RPN, with a deep convolutional neural network (CNN) to extract features from the proposed regions. The proposed regions are then resized to a fixed size and fed into the CNN to generate a feature vector for each region.

These feature vectors are then fed into a series of fully connected layers to perform object classification and bounding box regression. The fully connected layers take the feature vector and output a class label and the coordinates of the bounding box.

R-CNNs allow for the detection of multiple objects in a single image where a fixed length feature vector is extracted from each region proposal.

Fast R-CNN

Fast R-CNN is an improvement over the earlier R-CNN algorithm, which uses a Region Proposal Network (RPN) to generate region proposals, and then extracts features from each proposed region independently. Fast R-CNN instead extracts features from the entire image using a single forward pass through a deep convolutional network, and then uses these features to classify the objects in each region proposal.

Faster R-CNN

Faster R-CNN builds upon Fast R-CNN by integrating the Region Proposal Network directly into the network architecture.

This results in an efficient system, since the RPN and the classification network share features and computation. The RPN generates a set of region proposals by sliding a set of pre-defined anchor boxes over the feature map of the entire image, and then scores and refines these proposals based on their overlap with ground-truth object boxes. The proposals are then fed into the Fast R-CNN network for classification and bounding-box regression.

Getting a Feature Vector in Faster R-CNN

In Faster R-CNN, the feature vector is extracted from the feature maps generated by the backbone network, which is typically a network like VGG, ResNet, or Inception.

The feature extraction process in Faster R-CNN involves two stages:

-

Region Proposal Network (RPN):

The RPN takes the feature maps as input and generates a set of object proposals by sliding a set of predefined anchor boxes over the feature map and predicting their objectness scores and bounding-box offsets. The output of the RPN is a set of candidate object proposals, each represented by a set of four coordinates (x, y, width, height).

-

RoI Pooling: The feature vectors are then extracted from these candidate object proposals using a process called RoI (Region of Interest) Pooling. RoI pooling extracts a fixed-length feature vector from each candidate object proposal by dividing the proposal into a fixed number of subregions and max-pooling the feature activations within each sub-region. The resulting sub-region features are concatenated into a single feature vector that represents the object proposal.

The output of the RoI pooling layer is a set of fixed-length feature vectors, each representing an object proposal. These feature vectors are then fed into a classifier and a bounding box regressor to classify the object and refine its bounding box coordinates.

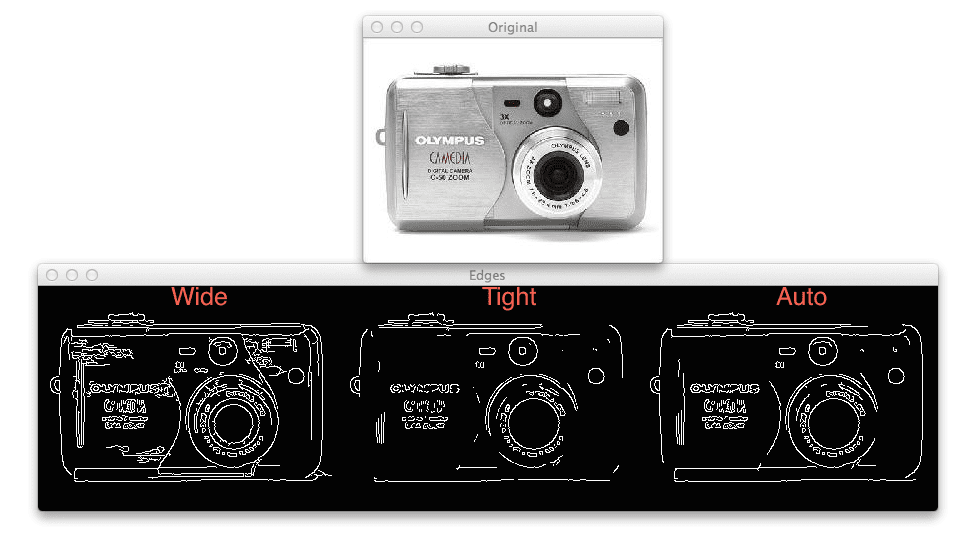

Older techniques to get a Feature Vector

Some of the old days techniques to get a FV are:

- HOG (Histogram of Oriented Graphs)

- Canny Edge Detector

- SIFT (Scale Invariant and Feature Transform)

Example: Canny edge detector with different parameter alpha

The vector should describe an object even if it is scaled or translated.

Transforming the input into a set of features is called the feature extraction, but in here we are just getting one feature per region.

R-CNN once it gets the features, each 2000+ region uses a selective search algorithm.

YOLO

YOLO (You Only Look Once) is a popular object detection algorithm that uses a deep convolutional neural network to detect objects in an image. Unlike traditional object detection methods that require multiple region proposals and classification steps, YOLO performs detection in a single forward pass of the neural network.

The YOLO algorithm works by dividing the input image into a grid of cells and predicting the bounding boxes and class probabilities for each cell. Each bounding box is represented by a set of four coordinates (x, y, width, height), and the class probabilities are predicted for each object category that the network is trained to recognize.

The YOLO algorithm consists of the following steps:

-

Image Input: The input image is passed through a deep convolutional neural network, such as DarkNet, which generates a feature map.

-

Grid Creation: The feature map is divided into a grid of cells, and each cell is responsible for detecting objects that are located within it.

-

Bounding Box Prediction: For each cell, the network predicts a fixed number of bounding boxes, along with the confidence scores that indicate the likelihood of the object’s presence inside each box. Each bounding box is also associated with a class probability score for each object category.

-

Non-Maximum Suppression: To eliminate overlapping bounding boxes, non-maximum suppression is applied to the output of the network. This involves discarding low-confidence bounding boxes that have a high overlap with other bounding boxes that have a higher confidence score.

-

Output: The final output of the network is a list of bounding boxes and their associated class probabilities.

YOLO has several advantages over traditional object detection algorithms, including speed and accuracy. Because YOLO performs detection in a single pass, it is significantly faster than other approaches that require multiple region proposals and classification steps. Additionally, YOLO is able to capture the context of an object in the image, which can improve detection accuracy.

Selective Search Algorithm

Selective Search is used for generating object proposals in the context of object detection. The basic idea behind Selective Search is to group similar pixels together into segments, which can be used as regions of interest (RoIs) for object detection.

The Selective Search algorithm works as follows:

-

Grouping: The image is segmented into different regions based on color, texture, and other low-level features. This is done using various segmentation algorithms like Felzenszwalb and Huttenlocher segmentation, which group together similar pixels.

-

Merging: The segmented regions are merged based on their similarity. Regions that are similar in color, texture, and other features are merged together to form larger regions.

-

Region Selection: The algorithm generates a hierarchy of region proposals at different scales and levels of granularity. Regions that are too small or too large are discarded, and the remaining proposals are used as candidate regions for object detection.

-

Object Detection: The candidate regions are then fed into a deep neural network, such as a Region-Based Convolutional Neural Network (R-CNN), to extract features and perform object detection.

The output of Selective Search is a set of proposed regions that may contain objects of interest, which are subsequently fed into a deep neural network for further processing. The algorithm is computationally efficient and has been shown to be effective for a wide range of object detection tasks.

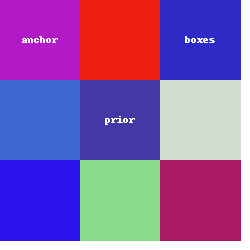

What are anchor boxes

Anchor boxes, or prior boxes use Region Proposal Networks (RPNs), such as Faster R-CNN and YOLO.

Anchor boxes are pre-defined bounding boxes that are placed at specific locations and scales over an image. These boxes act as templates for the network to predict the class label and bounding box coordinates of the objects present in the image.

During training, the network learns to adjust the location and size of the anchor boxes to better match the objects present in the image. This allows the network to detect objects of different sizes and shapes.

Using anchor boxes helps to reduce the computational complexity of object detection by reducing the number of possible object locations that the network needs to consider. It also improves the accuracy of object detection by allowing the network to better localize and classify objects in the image.

SSD

SSD (Single Shot MultiBox Detector) is another popular object detection algorithm that uses a deep convolutional neural network to detect objects in an image. Like YOLO, it is designed to be fast and efficient, and can detect multiple objects in a single pass.

The SSD algorithm works by dividing the input image into a grid of fixed-size default boxes, which are used as templates for predicting the location and class of objects within the grid. Each default box is associated with a set of class probabilities, indicating the likelihood that the object within the box belongs to each of the predefined classes.

The SSD algorithm consists of the following steps:

-

Image Input: The input image is passed through a deep convolutional neural network, such as VGGNet, which generates a feature map.

-

Feature Extraction: The feature map is then passed through a set of convolutional layers, which are responsible for detecting objects at different scales and aspect ratios.

-

Default Box Generation: For each location in the feature map, a fixed set of default boxes are generated with different aspect ratios and scales, which serve as templates for predicting the location and class of objects within the box.

-

Bounding Box Prediction: For each default box, the network predicts the offset between the box and the ground truth box, as well as the class probabilities for each predefined class.

-

Non-Maximum Suppression: Like YOLO, SSD uses non-maximum suppression to eliminate overlapping bounding boxes and retain only the most confident detections.

-

Output: The final output of the network is a list of bounding boxes and their associated class probabilities.

SSD has several advantages over traditional object detection algorithms, including its ability to detect objects at different scales and aspect ratios, and its speed and efficiency. Additionally, SSD is able to handle images with multiple objects of different classes, making it suitable for a wide range of applications, such as pedestrian detection, traffic sign recognition, and facial detection.

Similar tasks to Object Detection

There are several similar tasks to object detection not to be confused with object detection:

-

Object Localization: Object localization involves identifying the location of an object in an image, typically using bounding boxes. However, unlike object detection, it doesn’t involve identifying the class or category of the object.

-

Object Recognition: Object recognition is the task of identifying the class or category of an object in an image. Unlike object detection, it doesn’t involve localizing the object in the image.

-

Instance Segmentation: Instance segmentation is a more advanced version of object detection that involves not only detecting objects in an image but also segmenting each instance of the object with a pixel-level mask.

-

Semantic Segmentation: Semantic segmentation is the task of classifying each pixel in an image into a particular class, such as background, object, or a specific object class.

-

Object Tracking: Object tracking involves following an object in a sequence of frames, typically in a video. The goal is to determine the object’s trajectory over time and possibly identify its behavior.

These tasks share many common techniques and methods with object detection but they focus on different aspects of object recognition and image analysis.

…

tags: CNN - R-CNN & category: machine-learning